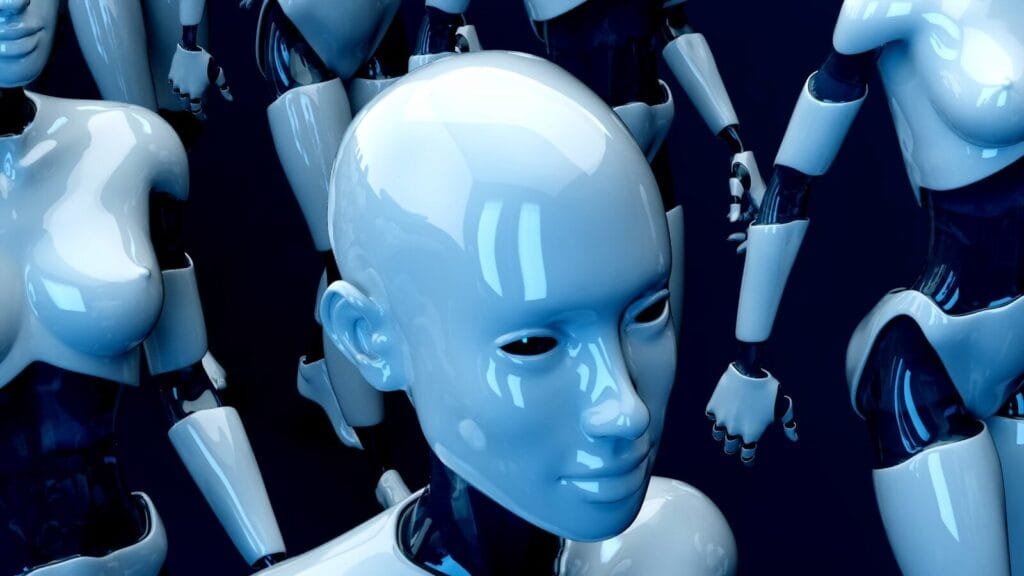

Artificial intelligence has unlocked remarkable creative capabilities—from generating realistic images and voices to producing high-quality video content. However, the same technology powering innovation is also enabling one of the most concerning digital threats of the decade: deepfakes.

In 2026, deepfakes are more sophisticated, accessible, and scalable than ever before. While AI-driven media generation has legitimate applications in entertainment, marketing, and education, it also presents significant risks to individuals, businesses, and governments.

This article examines the growing dangers of deepfakes and how AI companies are actively working to mitigate misuse.

Deepfakes are synthetic media—images, audio, or video—generated or altered using artificial intelligence to convincingly replicate real people’s appearances or voices.

They typically rely on:

The quality of deepfakes has improved dramatically, making them increasingly difficult to detect with the human eye or ear.

Voice-cloning tools can impersonate CEOs or finance officers to authorize fraudulent transfers. Businesses worldwide have already reported AI-generated voice scams targeting financial departments.

Deepfake videos can depict public figures saying or doing things that never occurred, potentially influencing elections or triggering social unrest.

Individuals may become victims of fabricated content that harms reputations or violates privacy. This includes non-consensual synthetic media.

Even authentic media can be dismissed as fake, creating a “liar’s dividend” in which genuine evidence is called into question.

Leading AI developers recognize the risks and are implementing safeguards across multiple layers: technical, policy-based, and collaborative.

Companies are embedding invisible watermarks into AI-generated images, audio, and video to signal synthetic origin.

For example, OpenAI has explored watermarking techniques for generated content, while Google integrates digital provenance tracking in certain AI systems.

These mechanisms help platforms and investigators identify AI-generated media.
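
To make the idea of an invisible watermark concrete, here is a minimal sketch that hides a short tag in the least-significant bits of pixel values. It is a toy illustration only: the function names, the `"AI"` tag, and the flat list of grayscale pixels are all assumptions made for this example, and it does not represent the method used by OpenAI, Google, or any other vendor. Production watermarks are designed to survive compression, cropping, and re-encoding, which this simple scheme would not.

```python
from typing import List


def _to_bits(data: bytes) -> List[int]:
    """Expand bytes into a list of bits, most significant bit first."""
    return [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]


def embed_watermark(pixels: List[int], tag: str) -> List[int]:
    """Return a copy of `pixels` with `tag` written into the lowest bit of
    the first len(tag) * 8 values. Each pixel changes by at most 1."""
    payload = _to_bits(tag.encode("ascii"))
    if len(payload) > len(pixels):
        raise ValueError("image too small to hold the tag")
    marked = list(pixels)
    for i, bit in enumerate(payload):
        marked[i] = (marked[i] & ~1) | bit  # clear the lowest bit, then set it
    return marked


def extract_watermark(pixels: List[int], length: int) -> str:
    """Read `length` ASCII characters back out of the lowest bits."""
    bits = [p & 1 for p in pixels[: length * 8]]
    out = bytearray()
    for start in range(0, len(bits), 8):
        byte = 0
        for bit in bits[start:start + 8]:
            byte = (byte << 1) | bit
        out.append(byte)
    return out.decode("ascii", errors="replace")


# Hypothetical 32-pixel grayscale "image" (values 0-255).
original = list(range(100, 132))
marked = embed_watermark(original, "AI")
recovered = extract_watermark(marked, 2)
```

Here `recovered` reads back the embedded tag, while no pixel in `marked` differs from `original` by more than one intensity level, which is why such a mark is invisible to a viewer. The same fragility that makes it easy to embed also makes it easy to strip, which is one reason watermarking is usually paired with the provenance and detection measures discussed in this article.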

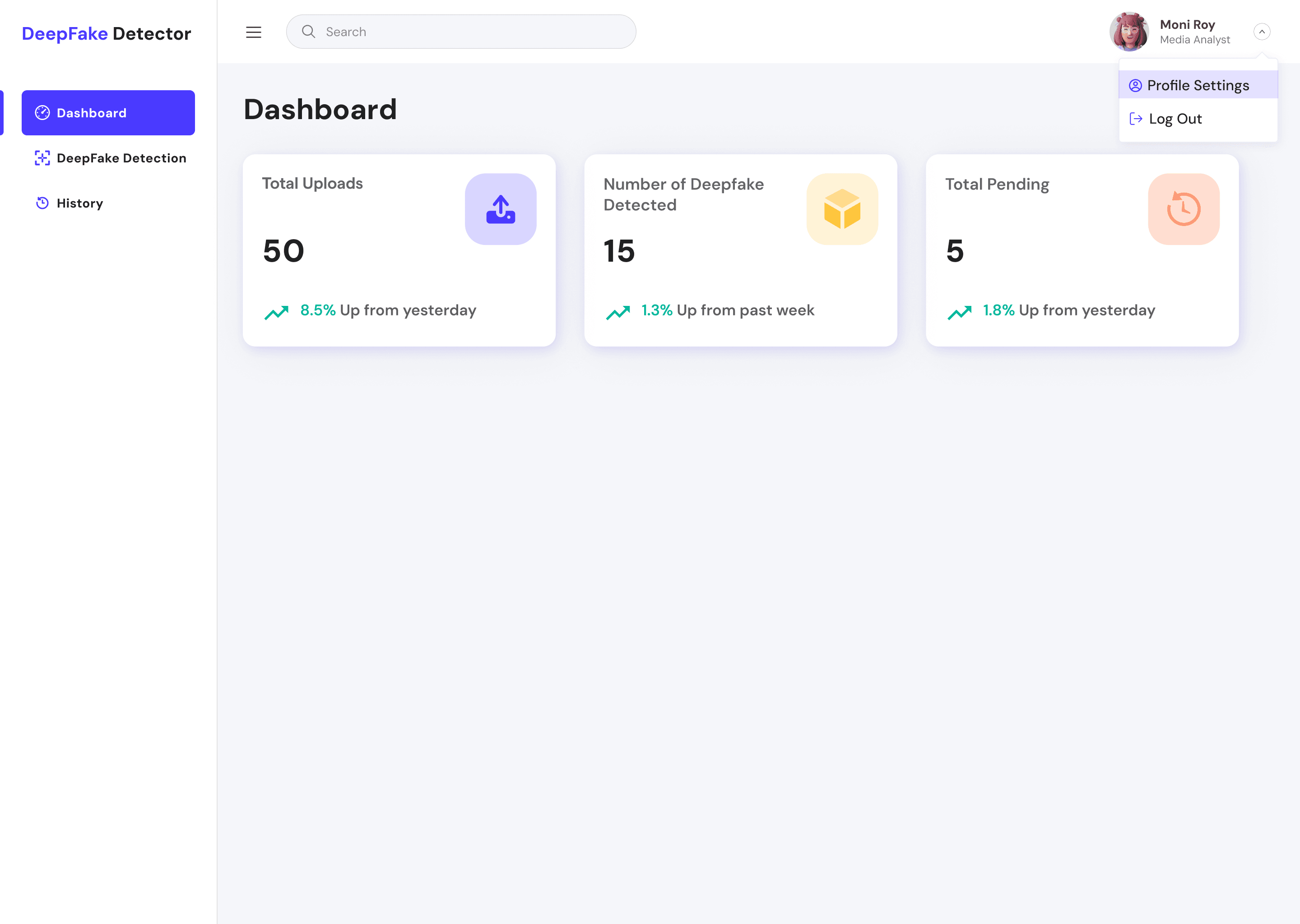

Ironically, AI is also among the most effective tools for detecting deepfakes.

Detection systems analyze:

Major technology firms like Microsoft and Meta Platforms are investing heavily in AI-driven authenticity verification.

AI platforms are tightening safeguards by:

These measures aim to reduce the likelihood of malicious misuse.

AI companies are increasingly working with policymakers to develop standards and regulations for synthetic media transparency.

Regulatory initiatives often focus on:

Public-private partnerships are becoming central to managing deepfake risks.

Social platforms are frontline defenders against deepfake spread.

They are implementing:

The speed at which misinformation spreads requires real-time response capabilities.

Deepfake risk mitigation is not limited to AI developers.

Education and awareness are essential defense layers.

In the coming years, expect:

As generative AI improves, the defense ecosystem must evolve equally fast.

Deepfake technology illustrates the dual-use nature of AI innovation. The same tools enabling creative expression and accessibility can also threaten trust, security, and democratic processes.

AI companies are responding with watermarking, detection systems, stricter access controls, and cross-industry collaboration. However, no single solution can eliminate the threat entirely.

Addressing deepfake risks requires coordinated efforts from AI developers, regulators, businesses, platforms, and users.

In the AI era, maintaining digital trust may become just as important as advancing technological capability.