Artificial intelligence is advancing rapidly—but so is global oversight.

By 2026, AI safety has become a central priority for governments, regulators, and technology companies worldwide. From foundation models and generative AI to autonomous systems and critical infrastructure, policymakers are introducing new rules to ensure AI systems are safe, transparent, and accountable.

As AI capabilities scale, so do concerns around misinformation, bias, data privacy, cybersecurity, and systemic risk. The global regulatory landscape is evolving quickly—and organizations deploying AI must now treat compliance as a strategic imperative.

This article explores the major AI safety regulations shaping 2026 and how companies are adapting to meet new policy standards.

Several factors have driven regulatory momentum:

Governments aim to strike a balance between fostering innovation and mitigating societal harm.

The European Union remains a global leader in AI regulation.

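In 2026, the EU AI Act is transitioning from legislative approval into active implementation. The law adopts a risk-based framework, categorizing AI systems into four tiers: unacceptable risk (prohibited practices), high risk, limited risk (transparency obligations), and minimal risk.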

High-risk AI systems—such as those used in hiring, credit scoring, or critical infrastructure—must comply with strict documentation, transparency, and human oversight requirements.
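For engineering teams, a risk-based rule of this kind often ends up as a lookup from use case to obligations. The sketch below is illustrative only: the `AISystem` type, the use-case labels, and the `required_obligations` helper are hypothetical names, and the mapping mirrors just the examples given above rather than the legal text of any regulation.

```python
from dataclasses import dataclass

# Use cases named above as high-risk. Illustrative only: a real
# classification depends on the regulation's text and legal review.
HIGH_RISK_USE_CASES = {"hiring", "credit_scoring", "critical_infrastructure"}

# Obligations the article attaches to high-risk systems.
HIGH_RISK_OBLIGATIONS = ("documentation", "transparency", "human_oversight")


@dataclass(frozen=True)
class AISystem:
    name: str
    use_case: str  # hypothetical label assigned by the deploying organization


def required_obligations(system: AISystem) -> tuple[str, ...]:
    """Return the obligations this sketch associates with a system."""
    if system.use_case in HIGH_RISK_USE_CASES:
        return HIGH_RISK_OBLIGATIONS
    # Other tiers are not modeled in this sketch.
    return ()


screener = AISystem(name="cv-screener", use_case="hiring")
print(required_obligations(screener))
```

Keeping the mapping in data rather than scattered conditionals makes it easier to update as guidance changes.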

The EU model is influencing regulatory frameworks globally.

The United States has taken a sector-focused and risk-based approach to AI governance.

Recent policy developments include:

Agencies are increasingly evaluating AI systems for bias, cybersecurity vulnerabilities, and national security implications.

Major AI developers such as OpenAI, Microsoft, and Google are aligning internal safety testing and transparency processes with federal expectations.

China continues expanding AI regulations focused on:

AI-generated content often requires labeling under domestic rules.

These countries emphasize:

Emerging digital economies are adopting AI governance guidelines that prioritize innovation while integrating privacy and safety safeguards.

Across jurisdictions, common regulatory themes are emerging:

Organizations must assess whether their AI systems pose high societal or operational risk.

Clear documentation of:

High-risk AI systems must include human review processes.
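One common way to build in human review is to route high-risk decisions through a reviewer before they take effect. The following is a minimal sketch under that assumption; the `decide` function and the `reviewer` callback are hypothetical and do not reflect any specific regulatory requirement.

```python
from typing import Callable


def decide(
    model_decision: str,
    high_risk: bool,
    reviewer: Callable[[str], str],
) -> str:
    """Return the final decision, deferring to a human when risk is high."""
    if high_risk:
        # The reviewer sees the model's proposal and may confirm or override it.
        return reviewer(model_decision)
    # Low-risk decisions pass through unchanged.
    return model_decision


# Example: a reviewer who overrides every automated rejection.
final = decide("reject", high_risk=True, reviewer=lambda proposal: "approve")
print(final)
```

In practice the reviewer would be a queue or a case-management tool rather than an inline callback, but the control flow is the same.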

Companies may be required to report significant AI failures or safety breaches.

AI-generated media may require watermarking or labeling to prevent deception.
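Labeling can be as simple as attaching machine-readable provenance metadata to generated output. The sketch below assumes a plain JSON envelope; the field names (`ai_generated`, `generator`, `labeled_at`) are hypothetical and are not drawn from any standard or regulation, and robust watermarking of images or audio would need dedicated techniques.

```python
import json
from datetime import datetime, timezone


def label_ai_generated(content: str, generator: str) -> str:
    """Wrap generated text in a JSON envelope that marks it as AI-generated."""
    record = {
        "content": content,
        "ai_generated": True,    # hypothetical field name
        "generator": generator,  # e.g. an internal model identifier
        "labeled_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(record)


def is_labeled(serialized: str) -> bool:
    """Check whether a serialized record carries the AI-generated label."""
    return json.loads(serialized).get("ai_generated") is True


labeled = label_ai_generated("Sample summary text.", generator="demo-model")
print(is_labeled(labeled))
```

A metadata label like this is easy to strip, which is one reason it is usually treated as a baseline rather than a complete safeguard.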

Independent AI audits are becoming more common.

Enterprises are conducting:

Third-party certification may become a competitive advantage in highly regulated markets.

Despite progress, regulatory challenges remain:

Multinational companies must navigate complex cross-border requirements.

Forward-looking organizations are implementing:

AI safety is increasingly integrated into product development from the earliest design stages.

Looking ahead, policy trends may include:

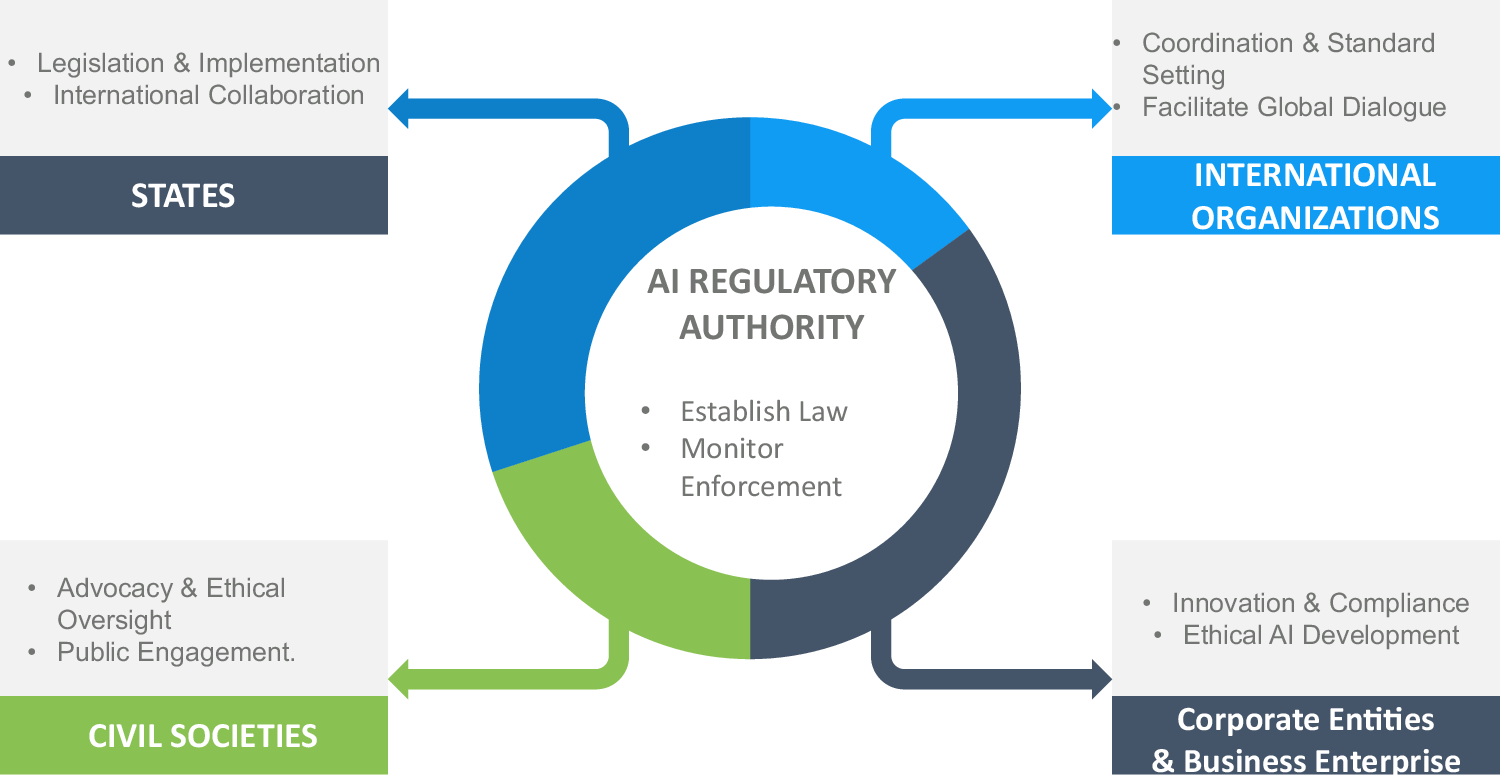

Global coordination will likely become more important as AI systems grow more powerful and interconnected.

AI safety in 2026 is no longer a theoretical discussion—it is a regulatory reality.

Governments worldwide are implementing structured frameworks to manage AI risk, ensure transparency, and protect public trust. For companies, compliance is not merely a legal obligation but a competitive differentiator.

Organizations that proactively integrate safety, governance, and accountability into their AI strategy will be better positioned to scale sustainably in an increasingly regulated global environment.

The future of AI innovation will depend not only on technological advancement but also on responsible, policy-aligned deployment.