Breaking News

Popular News

Enter your email address below and subscribe to our newsletter

AI News

Artificial intelligence is transforming cybersecurity—but it is also transforming cybercrime.

In 2026, AI is being used on both sides of the digital battlefield. While organizations deploy AI to detect threats faster and automate defenses, malicious actors are leveraging the same technologies to create more sophisticated, scalable, and harder-to-detect attacks.

The result is a rapidly evolving threat landscape where traditional security strategies are no longer sufficient.

This article explores the emerging cybersecurity threats in the AI era and how businesses can adapt their defense strategies.

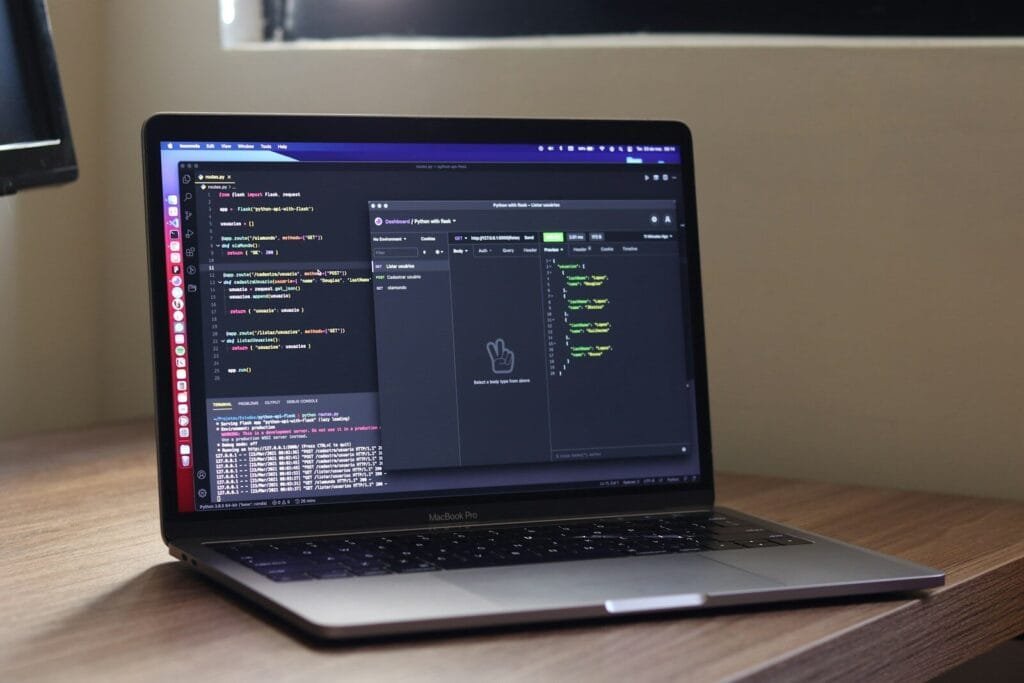

AI enables attackers to automate and enhance traditional hacking techniques. What once required significant technical skill can now be scaled with machine learning models and generative systems.

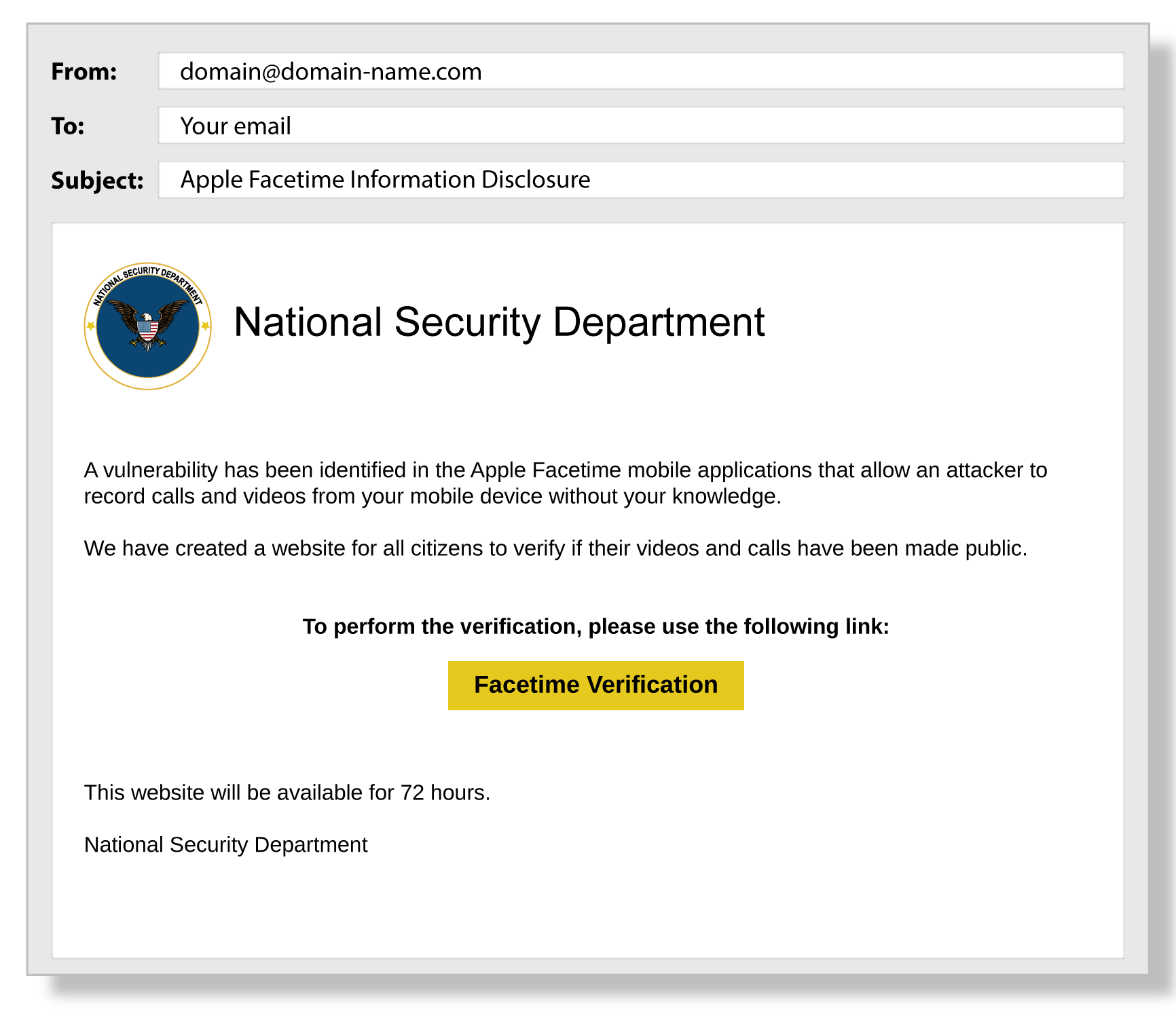

AI tools can generate highly convincing phishing emails, personalized at scale. By analyzing social media profiles, corporate communications, and public data, attackers can craft messages that mimic legitimate contacts.

Unlike earlier phishing campaigns filled with grammatical errors, AI-generated messages are polished and context-aware.

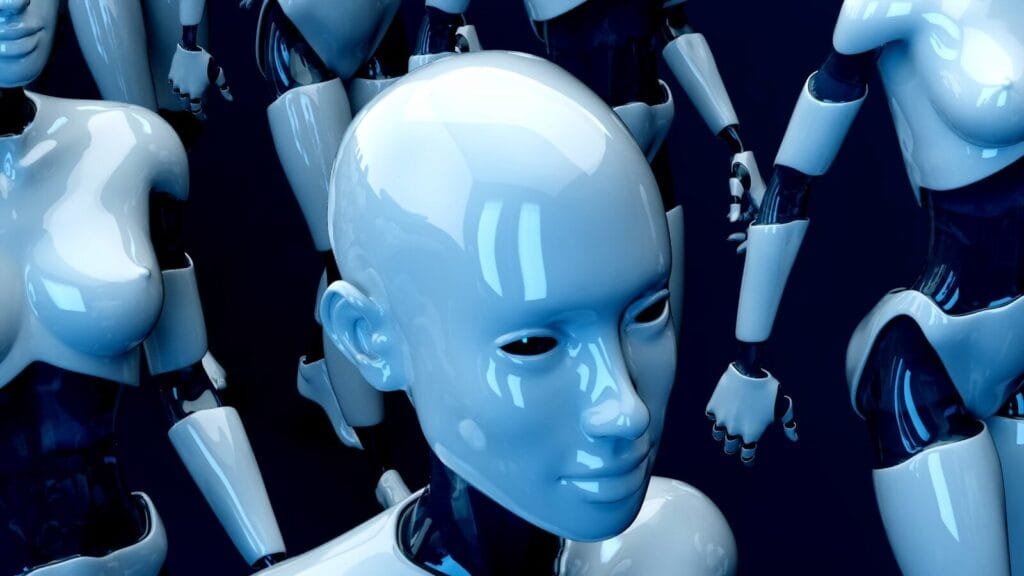

AI-generated deepfake videos and voice cloning tools are now capable of impersonating executives, public figures, or employees.

Emerging risks include:

Deepfakes significantly increase the effectiveness of social engineering operations.

Generative AI systems can assist in:

Although responsible AI developers implement safeguards, threat actors may attempt to bypass restrictions or use open-source tools.

AI systems themselves are becoming attack targets.

Threat vectors include:

Organizations deploying AI-powered chatbots or automation tools must secure these systems just like traditional infrastructure.

While AI introduces new threats, it also strengthens cybersecurity defenses.

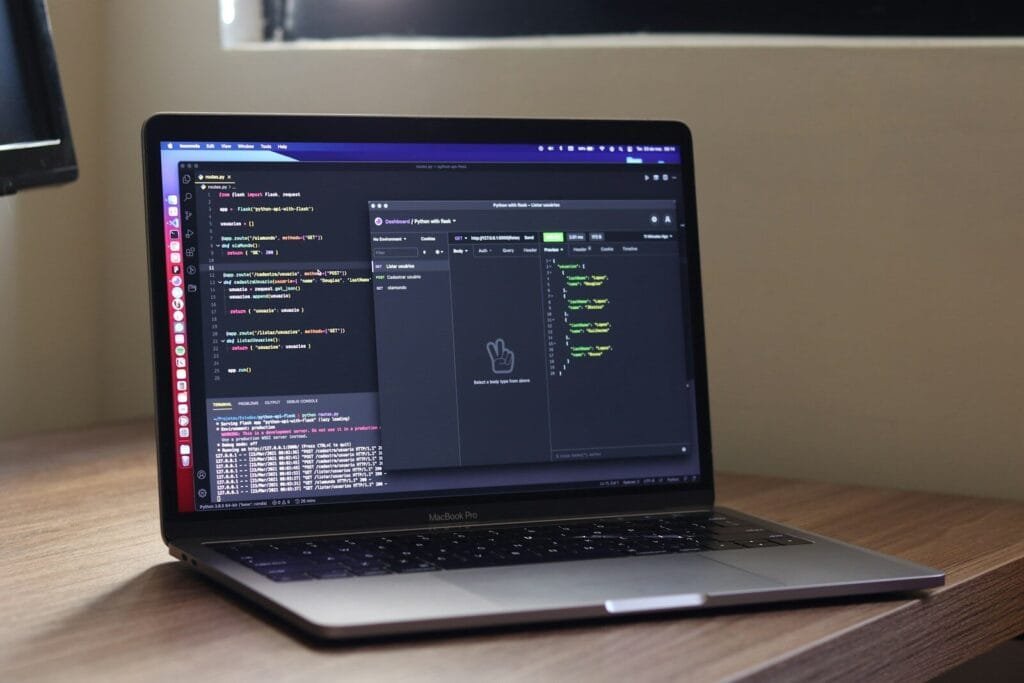

AI-driven security systems analyze massive data streams to detect anomalies and potential breaches faster than rule-based systems.

Machine learning can identify suspicious user behavior, such as:

This helps reduce insider threats and credential abuse.

AI systems can isolate compromised endpoints, block malicious IP addresses, and trigger containment protocols automatically.

Companies like Microsoft and Palo Alto Networks are integrating AI into advanced threat detection platforms.

Beyond corporate systems, AI also impacts national and societal cybersecurity.

Large-scale generative models can produce:

Organizations such as OpenAI implement content policies and safety testing to reduce misuse, but disinformation remains a global concern.

Organizations must treat AI security as a core governance priority.

To mitigate AI-era cybersecurity risks, organizations should:

Regularly test AI systems for vulnerabilities and misuse vectors.

Limit API access and implement strong authentication methods.

Track anomalies in AI outputs that may signal compromise.

Assume no user or system is inherently trustworthy without verification.

Security awareness programs must include deepfake and AI-generated phishing education.

Looking ahead, cybersecurity strategies will increasingly incorporate:

The cybersecurity landscape is no longer static—it evolves alongside AI capabilities.

The AI era introduces unprecedented innovation—but also unprecedented risk.

Cybercriminals are leveraging AI to scale attacks, automate deception, and exploit vulnerabilities faster than ever before. At the same time, AI-powered defense tools offer stronger detection, response, and resilience capabilities.

Organizations that proactively integrate AI security best practices will be better positioned to protect digital assets, customer trust, and operational continuity.

Cybersecurity in 2026 is no longer just about firewalls and antivirus software—it’s about securing intelligent systems in an increasingly intelligent threat environment.