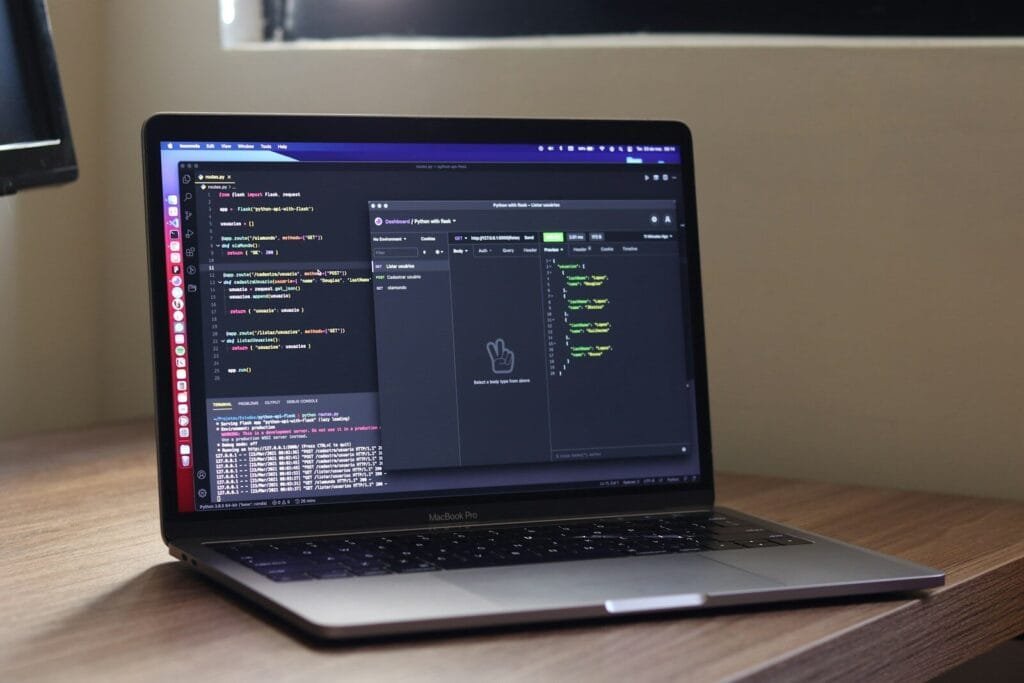

Artificial intelligence is advancing at unprecedented speed. From generative AI systems to autonomous decision-making platforms, AI technologies are now embedded across finance, healthcare, education, cybersecurity, and enterprise software.

However, as AI capabilities grow, so do concerns around bias, privacy, misinformation, safety, and accountability. Responsible AI development is no longer optional—it is a strategic, regulatory, and ethical necessity.

In 2026, leading technology companies and research organizations are adopting structured frameworks to ensure AI systems are safe, fair, transparent, and aligned with societal values. This article explores the industry’s best practices for responsible AI development and why they matter for long-term innovation.

Responsible AI refers to the design, development, deployment, and governance of AI systems in ways that keep them safe, fair, transparent, and aligned with societal values.

Organizations such as OpenAI, Microsoft, and Google DeepMind have formalized responsible AI frameworks to guide internal development and public deployment.

AI systems must function reliably under expected and unexpected conditions.

Best practices include:

Safety evaluations are particularly critical for large language models and multimodal systems.

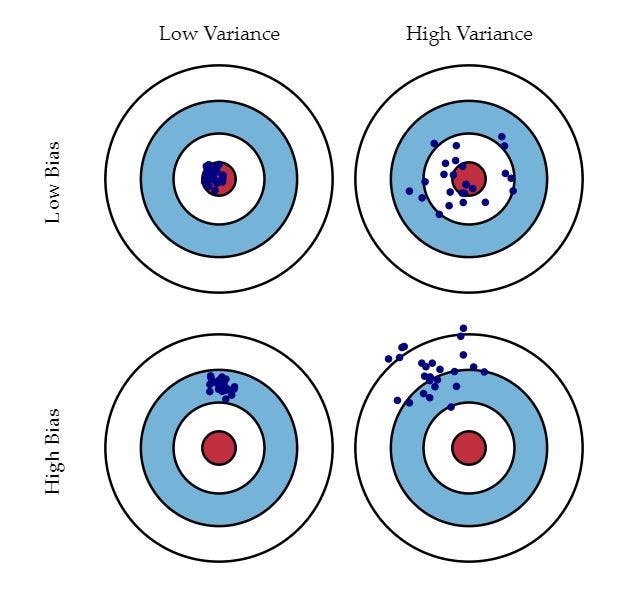

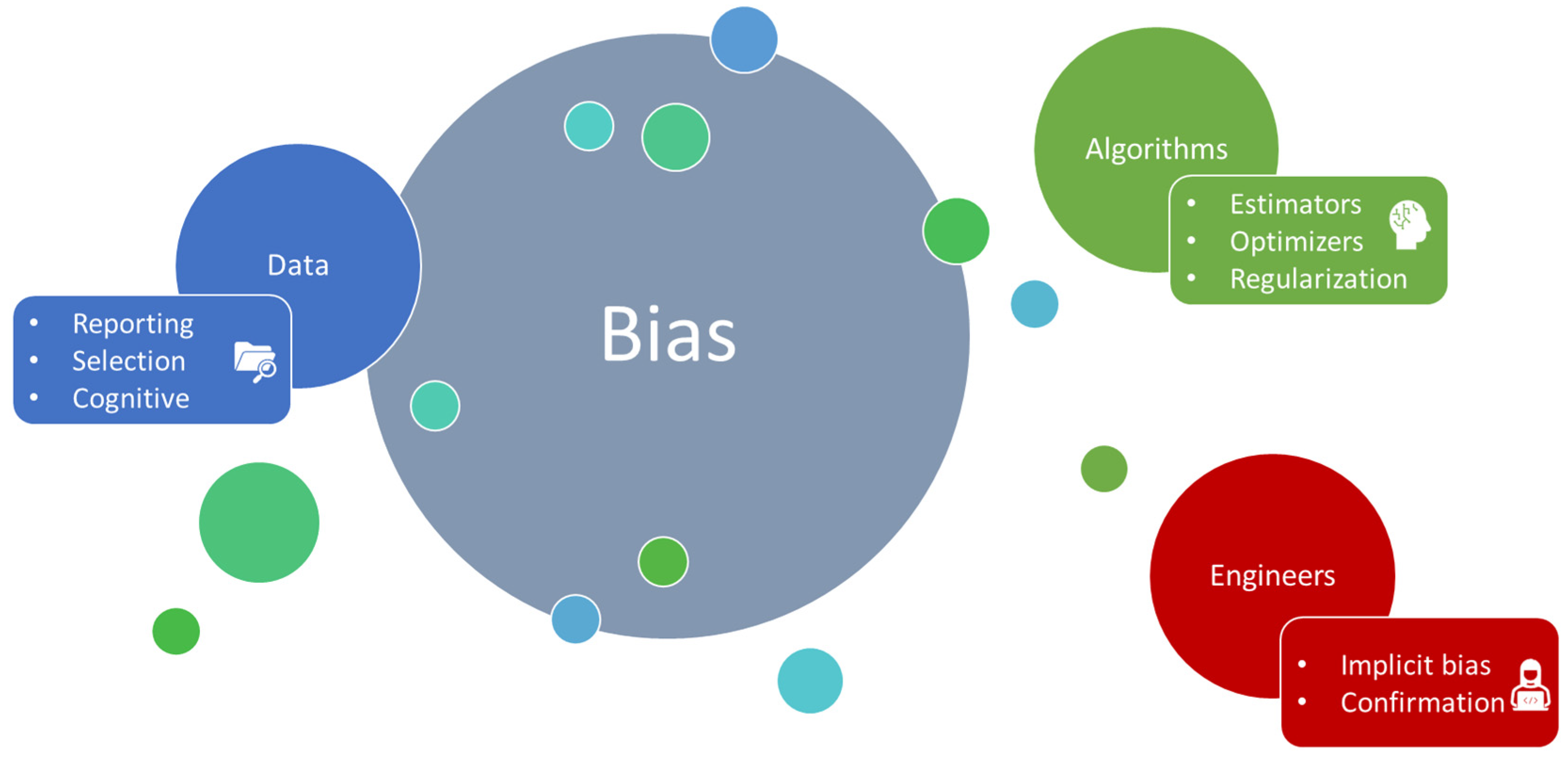

AI models learn from data—and data often contains historical biases.

Industry approaches include:

Addressing bias early in the lifecycle reduces downstream harm.
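One way to make "addressing bias early" concrete is to measure how often a model gives a favorable outcome to each group before it ships. The sketch below computes a demographic parity gap, a common fairness metric chosen here for illustration and not necessarily the one any named organization uses. The function names, the toy data, and the group labels are all hypothetical.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the fraction of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: 1 = favorable outcome, 0 = unfavorable outcome.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(preds, groups)
gap = demographic_parity_gap(preds, groups)
print(rates, gap)
```

A gap near zero means the groups receive favorable outcomes at similar rates. What counts as an acceptable gap is a policy decision that depends on the use case, and a single metric like this is a starting point for review, not proof of fairness.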

Users and regulators increasingly demand clarity about how AI systems function.

Responsible AI frameworks emphasize:

Transparency builds trust and reduces regulatory risk.

AI systems often rely on large-scale data processing, so privacy-first architecture is now widely treated as a best practice.

Key measures include:

Strong governance frameworks reduce legal exposure and protect user trust.

Fully autonomous systems in high-risk areas require oversight.

Best practices include:

Accountability mechanisms ensure that responsibility does not become diffused across complex AI systems.
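As a rough illustration of how oversight and accountability can be wired together, the sketch below routes every model decision either to automatic handling or to a human reviewer, and records each routing choice in an audit log. The `OversightGate` class, the 0.9 confidence threshold, and the field names are assumptions made for this example, not part of any framework mentioned in this article.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    case_id: str
    model_output: str
    confidence: float
    route: str       # "auto" or "human_review"
    timestamp: str   # UTC time the routing decision was recorded


@dataclass
class OversightGate:
    threshold: float = 0.9  # assumed cutoff; tune per use case
    audit_log: list = field(default_factory=list)

    def route(self, case_id, model_output, confidence, high_risk=False):
        """Send high-risk or low-confidence cases to a human and log the choice."""
        needs_human = high_risk or confidence < self.threshold
        decision = Decision(
            case_id=case_id,
            model_output=model_output,
            confidence=confidence,
            route="human_review" if needs_human else "auto",
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.audit_log.append(decision)  # every decision stays traceable
        return decision


gate = OversightGate()
first = gate.route("case-1", "approve", 0.95)
second = gate.route("case-2", "approve", 0.95, high_risk=True)
third = gate.route("case-3", "deny", 0.50)
print([d.route for d in gate.audit_log])
```

Because every decision is logged with its route and timestamp, a later review can establish which outcomes were automated and which passed through a person, which is the kind of traceability that keeps responsibility from being diffused.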

Global regulatory frameworks are shaping responsible AI standards:

Companies operating internationally must align AI deployment with evolving compliance requirements.

Regulatory readiness is increasingly viewed as a competitive advantage.

Generative AI introduces unique challenges:

Leading AI developers mitigate these risks through:

Balancing creativity with safety remains one of the industry’s most complex challenges.

Responsible AI is not just an ethical initiative—it has direct financial implications:

In 2026, institutional investors increasingly evaluate governance and AI risk management as part of due diligence.

Looking ahead, responsible AI development is evolving toward:

Sustainability is also becoming part of responsible AI, as large-scale model training consumes significant energy.

AI innovation is reshaping industries—but trust determines its long-term success. Responsible AI development ensures that technological advancement aligns with ethical principles, regulatory standards, and societal expectations.

Organizations that embed safety, fairness, transparency, and accountability into their AI strategies are better positioned for sustainable growth.

As AI systems become more powerful and autonomous, responsible development is not simply best practice—it is foundational to the future of intelligent technology.